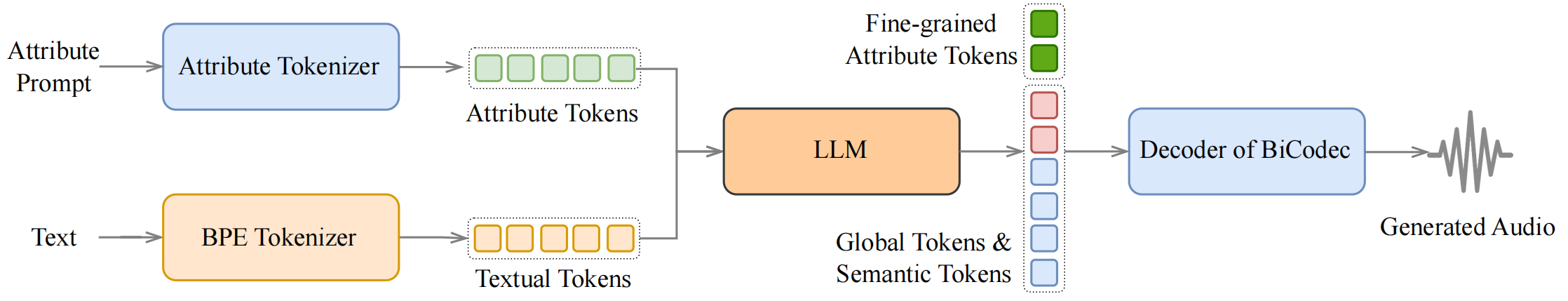

Spark-TTS is an advanced text-to-speech system that uses large language models (LLMs) to produce accurate, natural-sounding speech. It is designed to be efficient and flexible for both research and production use.

- Simplicity and Efficiency: Built entirely on Qwen2.5, Spark-TTS eliminates the need for additional generation models like flow matching. Instead of relying on separate models to generate acoustic features, it directly reconstructs audio from the code predicted by the LLM. This approach streamlines the process, improving efficiency and reducing complexity.

- High-Quality Voice Cloning: Supports zero-shot voice cloning, which means it can replicate a speaker's voice even without specific training data for that voice. This is ideal for cross-lingual and code-switching scenarios, allowing for seamless transitions between languages and voices without requiring separate training for each one.

- Bilingual Support: Supports both Chinese and English, including zero-shot voice cloning in cross-lingual and code-switching scenarios, so the model can synthesize natural, accurate speech in either language.

- Controllable Speech Generation: Supports creating virtual speakers by adjusting parameters such as gender, pitch, and speaking rate.

[2025-03-04] Our paper on this project has been published! You can read it here: Spark-TTS.

[2025-03-12] Nvidia Triton Inference Serving is now supported. See the Runtime section below for more details.

Clone and Install

Here are instructions for installing on Linux. If you're on Windows, please refer to the Windows Installation Guide.

(Thanks to @AcTePuKc for the detailed Windows instructions!)

- Clone the repo

      git clone https://github.com/SparkAudio/Spark-TTS.git
      cd Spark-TTS

- Install Conda: please see https://docs.conda.io/en/latest/miniconda.html

- Create Conda env:

      conda create -n sparktts -y python=3.12
      conda activate sparktts
      pip install -r requirements.txt
      # If you are in mainland China, you can set the mirror as follows:
      pip install -r requirements.txt -i https://mirrors.aliyun.com/pypi/simple/ --trusted-host=mirrors.aliyun.com

Model Download

Download via Python:

    from huggingface_hub import snapshot_download

    snapshot_download("SparkAudio/Spark-TTS-0.5B", local_dir="pretrained_models/Spark-TTS-0.5B")

Download via git clone:

    mkdir -p pretrained_models

    # Make sure you have git-lfs installed (https://git-lfs.com)
    git lfs install
    git clone https://huggingface.co/SparkAudio/Spark-TTS-0.5B pretrained_models/Spark-TTS-0.5B
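For scripted setups, the Python download can be wrapped so that it runs only when the model directory is missing. The following is a minimal sketch, not part of the repository: the helper names `model_dir_ready` and `ensure_model` are hypothetical, and treating any non-empty directory as a finished download is a simplifying assumption.

```python
from pathlib import Path

REPO_ID = "SparkAudio/Spark-TTS-0.5B"
LOCAL_DIR = Path("pretrained_models/Spark-TTS-0.5B")


def model_dir_ready(path: Path) -> bool:
    """Return True if `path` is a directory containing at least one entry.

    Assumption: a non-empty directory is treated as a finished download.
    """
    return path.is_dir() and any(path.iterdir())


def ensure_model(repo_id: str = REPO_ID, local_dir: Path = LOCAL_DIR) -> Path:
    """Download the model snapshot only if `local_dir` is missing or empty."""
    if not model_dir_ready(local_dir):
        # Imported lazily so the directory check works even before
        # huggingface_hub is installed.
        from huggingface_hub import snapshot_download

        snapshot_download(repo_id, local_dir=str(local_dir))
    return local_dir
```

Calling `ensure_model()` with no arguments uses the same repository ID and target directory as the commands above. A partially downloaded directory would pass this check, so delete it and retry if a download was interrupted.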

Basic Usage

You can run the demo with the following commands:

    cd example
    bash infer.sh

Alternatively, run inference directly from the command line:

    python -m cli.inference \
        --text "text to synthesize." \
        --device 0 \
        --save_dir "path/to/save/audio" \
        --model_dir pretrained_models/Spark-TTS-0.5B \
        --prompt_text "transcript of the prompt audio" \
        --prompt_speech_path "path/to/prompt_audio"
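When inference needs to be driven from a script, the same command can be assembled as an argument list and passed to `subprocess.run`. The sketch below uses only the flags shown in the command above. The functions `build_inference_command` and `run_inference` and their parameter names are hypothetical helpers, not part of the Spark-TTS codebase, and the sketch assumes it is run from the repository root.

```python
import subprocess
import sys


def build_inference_command(
    text: str,
    prompt_text: str,
    prompt_speech_path: str,
    save_dir: str,
    model_dir: str = "pretrained_models/Spark-TTS-0.5B",
    device: int = 0,
) -> list[str]:
    """Assemble the `python -m cli.inference` call as an argument list."""
    return [
        sys.executable, "-m", "cli.inference",
        "--text", text,
        "--device", str(device),
        "--save_dir", save_dir,
        "--model_dir", model_dir,
        "--prompt_text", prompt_text,
        "--prompt_speech_path", prompt_speech_path,
    ]


def run_inference(**kwargs) -> None:
    """Run the command in the current directory; raises on a non-zero exit."""
    subprocess.run(build_inference_command(**kwargs), check=True)
```

Passing the arguments as a list rather than a single shell string avoids quoting problems when the text to synthesize contains spaces or quotation marks.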

Web UI Usage

You can start the web UI by running `python webui.py --device 0`. It supports Voice Cloning and Voice Creation. For Voice Cloning, you can either upload reference audio or record it directly.
